AI adoption is racing ahead—but security budgets are still chasing incidents. The IBM 2025 Cost of a Data Breach study pegs the average hit at $4.4 million. According to Bloomberg, Alphabet plans to acquire Wiz for $32 billion, shifting security from add-on to core platform feature. Amazon’s answer is Bedrock, which bundles always-on encryption, PrivateLink endpoints and automated abuse filters at no extra cost. In this guide, we stack-rank six cloud AI platforms so you can keep experiments fast and the attack surface small.

How we chose and scored the contenders

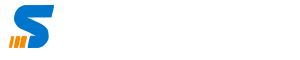

We started with the 10 vendors highlighted in Gartner’s 2024 Magic Quadrant for Cloud AI Developer Services.

Each platform then had to clear three non-negotiables:

- default encryption for all stored data

- native identity and access management

- at least one always-on security guardrail (no third-party plug-ins required)

That filter cut niche tools and bolt-on SaaS, leaving a field we could compare apples to apples.

How the initial cloud AI vendor pool was filtered and scored using strict security and NIST-aligned criteria.

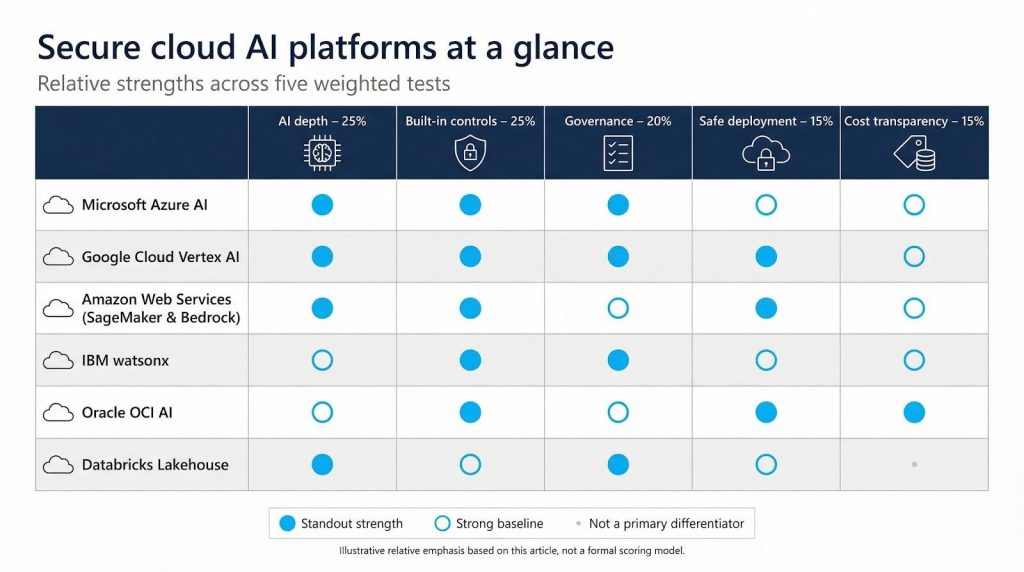

Our five weighted tests

- Depth of native AI capabilities (25 percent): A contender must cover the full ML life cycle, from data prep to monitored deployment; otherwise security features sit idle.

- Built-in security controls (25 percent): We rewarded always-active protections. Oracle’s Security Zones, for example, block any resource that tries to launch without encryption or with a public IP.

- Ease of safe deployment (15 percent): Higher scores went to platforms whose notebooks or endpoints default to private networks, with no extra clicks needed.

- Governance and compliance (20 percent): Certifications such as ISO 27001, FedRAMP and HIPAA are table stakes; bonus points for policy engines or auto-collected evidence that shorten audits.

- Cost transparency (15 percent): Security shouldn’t carry a surprise premium. Vendors that bundle core defenses into the base rate outperformed those that sell threat detection à la carte.

Weighting outcome

Security depth and AI breadth together make up 50 percent of the score; governance, usability and cost split the rest. We validated the final tallies with four independent cloud-security practitioners to reduce bias.
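For teams that want to reproduce the arithmetic, the weighting above can be sketched in a few lines of Python. The weights come from the five tests; the 0-to-10 rating scale, the criterion keys and the example ratings are assumptions made for illustration, not scores from this guide.

```python
# Weights taken from the five tests above; they should sum to 1.0.
WEIGHTS = {
    "ai_depth": 0.25,
    "security_controls": 0.25,
    "safe_deployment": 0.15,
    "governance": 0.20,
    "cost_transparency": 0.15,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-criterion ratings (assumed 0-10 scale) into one score."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


# Hypothetical ratings for an imaginary platform, not results from this guide.
example_ratings = {
    "ai_depth": 8.0,
    "security_controls": 9.0,
    "safe_deployment": 7.0,
    "governance": 8.0,
    "cost_transparency": 6.0,
}

if __name__ == "__main__":
    print(round(weighted_score(example_ratings), 2))
```

Keeping the weights in one dictionary makes it easy to rerun the ranking if your organization values, say, governance more heavily than cost.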

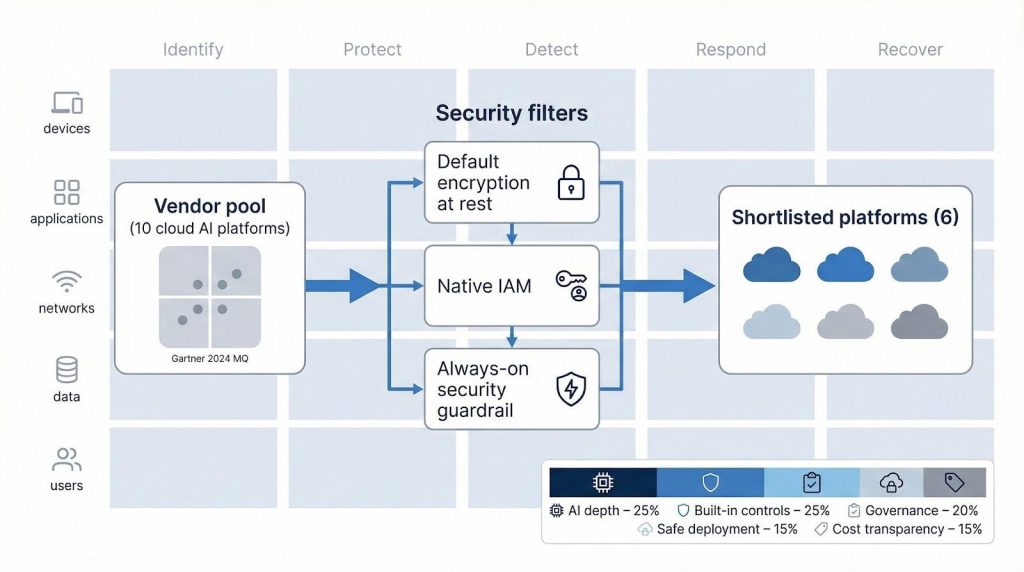

Teams that want to reproduce this kind of scoring model benefit from grounding it in a standard like the NIST Cybersecurity Framework instead of inventing categories from scratch.

TD SYNNEX guidance on zero trust shows how to use the Cyber Defense Matrix together with NIST Cybersecurity Framework 2.0. The matrix maps controls across functions such as Identify, Protect, Detect, Respond and Recover, and across assets including devices, applications, networks, data and users. The result is a reusable grid for comparing how each cloud AI platform strengthens your existing defenses or leaves gaps in them.

NIST Cybersecurity Framework 2.0 Functions Official Diagram.
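The function-by-asset grid described above can be sketched as a small Python structure. This is a minimal illustration: the function and asset names come from the text, while `build_grid`, `gaps`, `overlaps` and the sample control inventory are hypothetical names and data invented for the example.

```python
from itertools import product

# Functions and asset classes as listed in the text above.
FUNCTIONS = ["Identify", "Protect", "Detect", "Respond", "Recover"]
ASSETS = ["Devices", "Applications", "Networks", "Data", "Users"]


def build_grid(controls):
    """Place each (function, asset, control_name) tuple into its grid cell."""
    grid = {cell: [] for cell in product(FUNCTIONS, ASSETS)}
    for function, asset, name in controls:
        if (function, asset) not in grid:
            raise ValueError(f"unknown cell: {function}/{asset}")
        grid[(function, asset)].append(name)
    return grid


def gaps(grid):
    """Cells with no control mapped to them."""
    return [cell for cell, names in grid.items() if not names]


def overlaps(grid):
    """Cells where more than one control claims coverage."""
    return {cell: names for cell, names in grid.items() if len(names) > 1}


# Hypothetical control inventory, for illustration only.
sample_controls = [
    ("Protect", "Data", "default encryption at rest"),
    ("Protect", "Data", "customer-managed keys"),
    ("Detect", "Networks", "threat detection on VPC traffic"),
]
sample_grid = build_grid(sample_controls)
```

Calling `gaps(sample_grid)` lists the uncovered cells, and `overlaps(sample_grid)` shows where two controls land in the same cell, which is a prompt to check whether both are needed.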

The six platforms that cleared this bar—and why each stands out—follow next.

TD SYNNEX StreamOne Ion: provisioning to invoicing, kept in lockstep

Most people hear “StreamOne Ion” and think GPUs.

The early win is more boring than that: operational control.

StreamOne Ion is built as a cloud marketplace that can provision and manage multi-cloud + SaaS licensing with near real-time state across vendors. You can then expose that same catalog through a white-label storefront so customers can self-serve without your team living in ten portals.

Where it gets interesting is the plumbing.

StreamOne’s sync engine is designed for the reality that changes happen in multiple places. It reconciles what happens inside StreamOne and what happens directly in hyperscaler portals by polling vendors on a 30-minute cadence. When things drift anyway, the billing reconciliation layer is meant to flag inconsistencies early, before they turn into month-end invoice arguments.
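To make the idea of drift detection concrete, here is a minimal Python sketch of comparing two snapshots after a poll. It is not StreamOne's implementation or API: the snapshot shape (`{subscription_id: {attribute: value}}`), the `Drift` record and the sample data are all assumptions made for illustration. Only the 30-minute cadence comes from the text.

```python
from dataclasses import dataclass

POLL_INTERVAL_SECONDS = 30 * 60  # the 30-minute cadence mentioned above


@dataclass(frozen=True)
class Drift:
    subscription_id: str
    attribute: str
    marketplace_value: object
    vendor_value: object


def find_drift(marketplace: dict, vendor: dict) -> list[Drift]:
    """Compare two {subscription_id: {attribute: value}} snapshots."""
    drifts = []
    for sub_id in sorted(set(marketplace) | set(vendor)):
        ours = marketplace.get(sub_id)
        theirs = vendor.get(sub_id)
        if ours is None or theirs is None:
            # The subscription exists on one side only.
            drifts.append(Drift(sub_id, "<exists>", ours is not None, theirs is not None))
            continue
        for attribute in sorted(set(ours) | set(theirs)):
            if ours.get(attribute) != theirs.get(attribute):
                drifts.append(Drift(sub_id, attribute, ours.get(attribute), theirs.get(attribute)))
    return drifts


# Hypothetical snapshots: what the marketplace believes vs. what the vendor reports.
marketplace_view = {"sub-1": {"seats": 10, "plan": "E3"}, "sub-2": {"seats": 5}}
vendor_view = {"sub-1": {"seats": 12, "plan": "E3"}, "sub-3": {"seats": 1}}
```

In this sample, a seat count changed directly in the vendor portal, one subscription is missing on the vendor side and another is unknown to the marketplace. Each would surface as a `Drift` record that a billing check could flag before invoicing.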

Day to day, the product pushes an integration-first workflow.

Ion supports headless commerce. That means connectors into PSA tools like ConnectWise and Autotask, plus an API stack you can test in Swagger before you wire it into your own systems. A ServiceNow connector is listed as coming “soon.”

The ConnectWise connector details are unusually concrete: near real-time sync into PSA, visibility into pricing and margin, tracking subscription changes, mapping wizards, proration rules, notifications, and audit reporting. In plain terms: it’s trying to keep SaaS + IaaS billing consistent without you building a bunch of glue code.

Security shows up in two layers: platform assurance and a repeatable way to think about coverage.

On the platform side, expect audit logs and visible sync logs in the integration layer. Apptium (the platform behind StreamOne Ion) reports SOC 2 Type 1 plus ISO/IEC 27001, 27017, and 27018—useful when enterprise customers ask for paperwork, not vibes.

On the program side, TD SYNNEX leans on its broader security catalog. Drawing on 100+ pre-built offerings across 50+ security vendors, its cybersecurity solutions include zero-trust guidance that maps the Cyber Defense Matrix alongside NIST CSF 2.0. The practical output is a reusable grid for mapping controls across Identify, Protect, Detect, Respond, and Recover, and across assets like devices, apps, networks, data, and users, so you can quickly see what’s covered, what’s redundant, and what’s missing as you expand into multi-cloud and AI.

Trade-off to plan for: Ion rewards teams that commit to the integration model. If your PSA mappings, pricebooks, and workflows stay messy, you’ll end up with one more portal and a new set of mismatches to chase.

Best fit: MSPs and resellers scaling multi-cloud + SaaS who want procurement → provisioning → reconciliation → invoicing to behave like one system. It’s especially relevant if you live inside ConnectWise or Autotask and you need invoices to reflect reality continuously, not during a month-end scramble.

Microsoft Azure AI: enterprise depth, security in the DNA

Spin up an Azure Machine Learning workspace and three safeguards start protecting you right away: AES-256 encryption at rest, Microsoft Entra ID (formerly Azure AD) identity and network isolation inherited from the resource group. Private endpoints keep traffic off the public internet. Azure Policy can deny any attempt to assign a public IP or disable encryption; that guardrail follows Microsoft Defender for Cloud (formerly Security Center) recommendations.

Microsoft Azure AI Studio and Machine Learning Workspace Interface.

Day to day, Microsoft Defender for Cloud scans containers and VM images for vulnerabilities and feeds anomalies into Microsoft Sentinel for correlation. In a recent red-team drill the SOC received an alert within minutes after a compromised notebook tried to mine cryptocurrency. The quick signal showed how real-time monitoring shortens the gap between experiment and incident.

Governance rides along. Azure AI Studio stamps every dataset, model and pipeline with lineage metadata, so proving that a model trained only on GDPR-cleared data is a two-click task. Compliance officers also lean on Azure’s FedRAMP High boundary; 137 services are now covered in Azure Government regions.

Speed still matters. The ND H100 v5 VM series delivers clusters of up to thousands of NVIDIA H100 GPUs interconnected by 400 Gb/s InfiniBand, cutting large-model training time compared with the prior A100 generation.

Trade-off to plan for. Fine-tuning VNets, Key Vault keys and conditional access takes cloud expertise. Budget a landing-zone template on day one, so “just a quick exception” doesn’t unravel guardrails later.

Best fit. If your organization already runs on Microsoft 365 or needs FedRAMP High assurances, Azure delivers deep AI services and a broad security stack under one roof without bolting on extra tools.

Google Cloud Vertex AI: zero-trust roots, automated governance

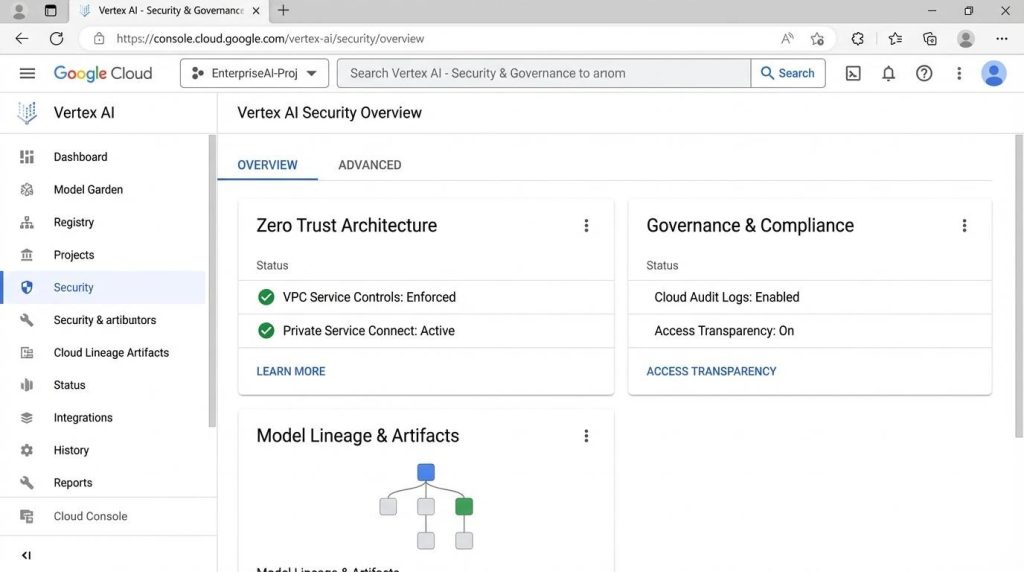

Every Vertex AI request travels on Google’s zero-trust backbone. IAM checks the caller, data stays encrypted in transit and at rest, and training jobs begin with outbound traffic blocked by default. Launch a Workbench notebook and the VM starts inside a private subnet; Cloud Logging captures each command for forensics.

Google Cloud Vertex AI Console with Zero-Trust and Governance Features.

Security Command Center (SCC) adds continuous posture checks. New Event Threat Detection rules flag issues such as a dormant service account calling Vertex AI APIs or an account exfiltrating data. Findings flow to Chronicle, so the SOC hunts AI and network threats from one console.

Governance sets Vertex apart. The Recommended AI Controls framework turns NIST or ISO requirements into a one-click assessment that auto-collects evidence across Vertex AI, IAM and Cloud Storage. Auditors leave with a PDF, and you keep coding.

Developers still need speed. A single TPU v4 pod links 4,096 chips at 6 Tbps, trimming large-model training time by about 2.1× versus TPU v3. Add VPC Service Controls and even stolen credentials can’t move data outside the perimeter.

Limits to note. Google added FedRAMP High for Vertex AI Search and Generative AI modules in March 2025, yet some related services remain in Moderate. Support tiers can feel thinner than Microsoft’s, so confirm 24/7 coverage if your board expects a hotline.

Best fit. Pick Vertex AI when you value zero-trust perimeters, automated audits and the latest TPUs without wiring your own security stack. You’ll iterate quickly, with paperwork ready when compliance knocks.

Amazon Web Services: guardrails for every layer of the stack

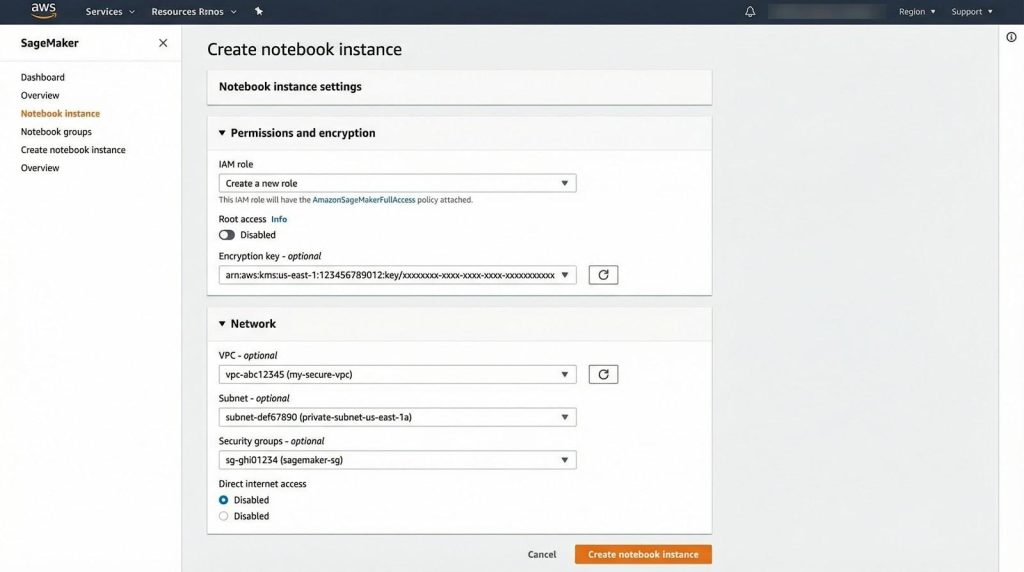

AWS calls security job zero, and SageMaker and Bedrock make that promise tangible.

When you spin up a SageMaker notebook, the wizard requires an IAM role scoped to only the services you need. Launch a training job and, unless you override it, the container runs in a private subnet; all data lands in S3 encrypted with an AWS-managed key or your own KMS key. Nothing leaves the VPC unless you allow it.

Amazon SageMaker Notebook and Security Configuration Overview.

Threat detection on autopilot. Amazon GuardDuty now analyzes SageMaker events, alerting if an execution role suddenly probes thousands of IP addresses, then sending findings to Security Hub for a single security view across EC2, Lambda and AI workloads.

GenAI-aware controls. Amazon Bedrock routes every prompt and response through automated abuse-detection filters and supports VPC connectivity through AWS PrivateLink endpoints, keeping traffic off the public internet.

Compliance without spreadsheets. AWS Artifact surfaces SOC 2 or PCI attestation letters on demand, while the AWS Config managed rule SAGEMAKER_NOTEBOOK_NO_DIRECT_INTERNET_ACCESS flags any notebook instance with direct internet access, so you can keep notebooks private across your organization.
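As a rough sketch of how that managed rule might be registered, the Python below builds the `ConfigRule` payload and, separately, passes it to boto3's `put_config_rule`. The rule name and description are hypothetical choices. The `deploy` step assumes boto3 is installed, credentials are configured and an AWS Config recorder is already running in the account. Note that a Config rule evaluates and reports compliance; it does not by itself block a noncompliant notebook from launching.

```python
def private_notebook_rule(rule_name: str = "sagemaker-notebook-no-direct-internet") -> dict:
    """Build the ConfigRule payload for the AWS-managed rule named above."""
    return {
        "ConfigRuleName": rule_name,  # hypothetical name; pick your own
        "Description": "Flags SageMaker notebook instances with direct internet access.",
        "Source": {
            "Owner": "AWS",  # AWS-managed rule rather than a custom one
            "SourceIdentifier": "SAGEMAKER_NOTEBOOK_NO_DIRECT_INTERNET_ACCESS",
        },
    }


def deploy(rule: dict) -> None:
    """Register the rule in the current account and region."""
    import boto3  # imported lazily so the payload builder works without it

    boto3.client("config").put_config_rule(ConfigRule=rule)
```

Separating the payload builder from the deploy call keeps the rule definition reviewable and testable without touching an AWS account.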

Plan for the flip side

Flexibility means misconfigurations can still happen. Set up a well-architected landing zone, tag resources and enforce least privilege from day one to avoid security debt.

Best fit. Choose AWS when you need precise control, the broadest global region list or IL5-level authorizations for defense workloads—just bring a disciplined architecture to use the platform safely.

IBM watsonx: governance first, cloud or on-prem

Enter watsonx.ai and the first thing you see is an AI factsheet: an auto-generated dossier that logs dataset lineage, model parameters, approvals and risk scores. Compliance teams treat it as evidence, while developers experience it as background noise that never slows their work.

Data isolation by design. Store assets in watsonx.data and everything is encrypted with a customer-controlled key housed in an HSM that meets FIPS 140-2 Level 4, the highest commercial rating. Turn on Hyper Protect mode and the runtime executes inside a confidential-computing enclave, so even cloud administrators cannot view memory.

Continuous posture checks. IBM’s Security and Compliance Center benchmarks resources against more than a dozen frameworks, from ISO 27001 to PCI DSS. Drift appears as red tiles with one-click remediation, while watsonx.governance blocks promotion if a model hash differs from its approved artifact.
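The hash check behind that kind of promotion gate is simple to illustrate. The Python below is a generic sketch, not the watsonx.governance API: `sha256_of` and `can_promote` are hypothetical helpers, and SHA-256 is an assumed choice of digest.

```python
import hashlib
import hmac


def sha256_of(artifact: bytes) -> str:
    """Hex digest of a model artifact's bytes."""
    return hashlib.sha256(artifact).hexdigest()


def can_promote(artifact: bytes, approved_hash: str) -> bool:
    """Allow promotion only when the artifact matches the approved digest."""
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(sha256_of(artifact), approved_hash)


# Hypothetical usage: the digest is recorded at approval time, then
# re-checked just before the model is promoted.
approved = sha256_of(b"model-v1-weights")
```

With this in place, `can_promote(b"model-v1-weights", approved)` passes, while any artifact whose bytes changed after approval fails the gate.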

Hybrid in practice. Because watsonx ships on Red Hat OpenShift, the same stack runs in AWS (via ROSA), Azure or on-prem mainframes. IAM, encryption and audit logs stay consistent across locations. That portability satisfies data-sovereignty rules without fragmenting your toolchain.

Trade-offs. The product community is smaller than the hyperscalers’, so budget time for custom connectors or bring in IBM Consulting for turnkey delivery.

Best fit. Choose watsonx when regulators inspect every commit or when sensitive data must remain behind a firewall yet still feed modern AI. You will give up a little feature velocity for certified control and a paper trail that proves every inference stayed clean.

Oracle OCI AI: preventive security that refuses bad configs

Oracle built Security Zones so risky resources never launch. Mark a compartment as Maximum Security and any API call that tries to attach a public IP or create an unencrypted bucket is rejected at runtime. Cloud Guard then watches what the policy engine cannot block and is free for all tenants, covering misconfigurations, suspicious API calls and anomaly detection.

These guardrails let small teams operate like mature SecOps groups. Data scientists can launch GPU shapes, train models and deploy endpoints without touching firewall rules. If a setting drifts, Cloud Guard fixes it or opens a ticket.

One IAM umbrella. Point OCI Data Science at Autonomous Data Warehouse and train in place; traffic stays on Oracle’s backbone and keys remain in Oracle Vault (customer-managed or HSM-backed). The entire path from ERP dataset to inference call shares one audit trail.

Predictable pricing. Web Application Firewall, DDoS protection, Security Zones and Cloud Guard all cost $0, so CFOs see only pennies added to the bill, not percentage points.

Trade-offs

Third-party MLOps tooling still gravitates to AWS, Azure and Google Cloud. Plan extra wiring or lean on Oracle Consulting. Talent supply follows the same curve.

Best fit. Pick OCI when “can’t-misconfigure” safety nets, tight Oracle database coupling or DoD IL5 authorization requirements top your list. In OCI, a resource that threatens data simply never launches.

Databricks Lakehouse: open data, governed by default

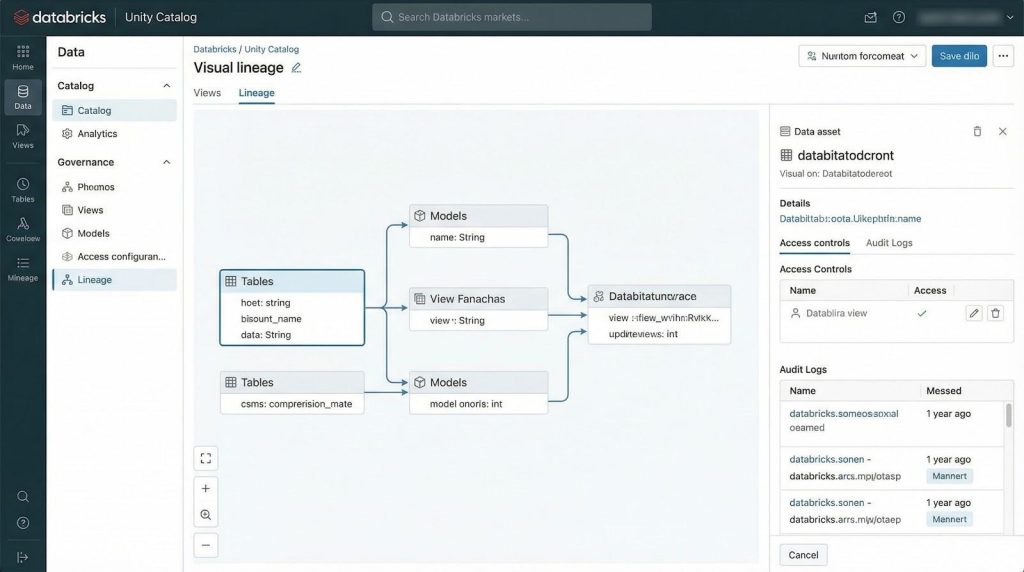

Many teams treat Databricks as their AI cockpit even when the raw compute runs on AWS, Azure or Google Cloud. The reason is Unity Catalog. Turn it on and every table, file, feature and model inherits fine-grained permissions plus full audit logs. Unity now captures lineage to the column level and keeps it for one year, so an admin can pinpoint exactly who touched column B of dataset X at 2:04 p.m.

Databricks Unity Catalog Data Lineage and Governance Interface.

Lineage covers the whole pipeline. Build with Delta Live Tables and Unity draws a graph from raw ingestion through transformations to the model-serving endpoint, so one glance answers the perennial “where did this come from?” compliance question.

Policy-guarded clusters. Compute policies let you pre-stamp safe templates: no public IPs, approved libraries only and auto-terminate after 60 minutes of idle time. Users pick a policy the way they pick a coffee size; they never deal with the espresso machine’s wiring.
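A compute policy is, at heart, a set of per-attribute rules. The Python sketch below shows a policy definition using the `fixed` and `allowlist` rule types together with a simplified checker. The checker is a stand-in written for illustration, not the Databricks policy engine (which, among other things, applies fixed values automatically rather than rejecting requests). The instance types are example values only.

```python
import json

# Policy definition: attribute name -> rule.
POLICY = {
    # Clusters shut down after 60 idle minutes, as described above.
    "autotermination_minutes": {"type": "fixed", "value": 60},
    # Example instance types only; substitute your approved list.
    "node_type_id": {"type": "allowlist", "values": ["g5.xlarge", "g5.2xlarge"]},
}


def violations(cluster_request: dict, policy: dict = POLICY) -> list[str]:
    """Return human-readable reasons a request breaks the policy."""
    problems = []
    for attribute, rule in policy.items():
        value = cluster_request.get(attribute)
        if rule["type"] == "fixed" and value != rule["value"]:
            problems.append(f"{attribute} must be {rule['value']}, got {value}")
        elif rule["type"] == "allowlist" and value not in rule["values"]:
            problems.append(f"{attribute} {value!r} is not in the allowlist")
    return problems


if __name__ == "__main__":
    # The JSON form is what you would paste into a policy definition.
    print(json.dumps(POLICY, indent=2))
```

A request for an unapproved GPU type with auto-termination disabled would come back with two violations, which is the behavior the coffee-size analogy describes: users choose among safe options and never see the wiring.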

Because Databricks runs in your cloud account, it inherits each provider’s encryption, PrivateLink and threat analytics. Want GuardDuty alerts on odd network spikes from a Spark worker? You get them. Prefer Microsoft Sentinel? Same story. Databricks stitches those findings to data-lineage context so responders see the full narrative.

Openness is the trump card. Everything rests on standards—Parquet, Delta Lake, MLflow, Spark—so if you migrate clouds or move on-prem (Databricks on Kubernetes), Unity Catalog and its lineage tags travel with you.

Watch-outs

- Shared responsibility. You secure storage buckets and VNets; Databricks secures the control plane. Document the split.

- Cluster sprawl. Idle GPUs burn budget. Enforce auto-stop and tag spend ceilings early.

Best fit. Choose Databricks when multi-cloud flexibility and end-to-end lineage matter more than single-vendor convenience. You’ll get an open Lakehouse that protects data as carefully as it processes it, wherever the bits live.

Conclusion

Security and speed no longer have to be trade-offs. Each of the six cloud AI platforms we reviewed integrates core defenses, from encryption and private networking to automated compliance, in ways that let data scientists innovate quickly while keeping auditors, regulators and customers confident that sensitive information stays protected.

Summary view comparing six cloud AI platforms across AI depth, built-in security, governance, deployment safety, and cost transparency.