The Legitimate Claimant Flagged as Fraud

A legitimate user files a financial recovery claim after carefully uploading documents and confirming their identity. A few minutes later the claim is halted: a fraud detection system has flagged the submission. From a machine learning standpoint, the system worked as designed. From the user's standpoint, trust is destroyed immediately.

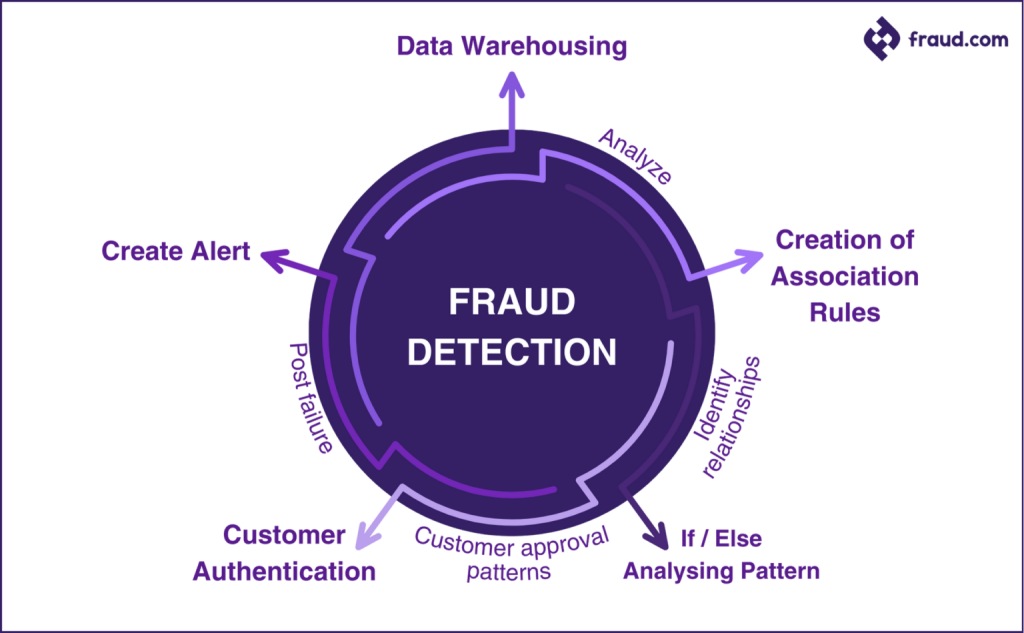

Anomaly detection workflow illustrating how fraud analysis cycles identify suspicious patterns while balancing automated alerts with customer verification and trust.

This scenario captures the central tension in modern fraud prevention. Financial claims systems must detect fraud aggressively to protect people’s funds, yet overly sensitive models drive away genuine users through false positives. The cost of missing a fraud attempt is financial; the cost of falsely accusing a genuine user is psychological. This paper examines how anomaly detection and fraud classification models operate in financial claims settings, and why sustainable fraud prevention requires balancing technical accuracy with human experience.

The Fraud Landscape in Financial Claims

Financial claims systems are attractive targets for fraud because records are fragmented, ownership verification is delayed, and payouts are large. Identity theft is common: attackers use stolen personal information to impersonate legitimate claimants. Opportunistic fraud involves people who claim assets belonging to another person whose identifying details resemble their own.

More advanced schemes include synthetic identities, which blend real and fictitious data to evade detection, and document forgery using fake identification, utility bills, or certificates. Organized fraud rings target multiple government claims systems, and insider fraud is committed by authorized staff who abuse their access.

Industry research consistently indicates that unclaimed or dormant financial assets attract disproportionately high levels of attempted fraud compared with active accounts. This motivates automated detection, and it pressures models to make firm decisions under uncertainty.

Supervised Learning for Fraud Classification

Supervised fraud classification relies on labeled historical data containing both fraud incidents and valid claims. Models learn patterns in claim amounts, document attributes, submission times, and user behavior. Typical algorithms include random forests, gradient boosting machines, and deep neural networks.

One obstacle is class imbalance. Fraud cases are rare compared with valid claims, which can bias a model toward the majority class and leave it overconfident in its predictions. Consequently, systems use probability scores rather than binary decisions, so that thresholds can be adjusted dynamically.

Model evaluation focuses on false positive rate and recall. ROC curves help visualize the trade-off, whereas in highly imbalanced settings precision-recall analysis is usually more informative. In financial claims, even small increases in false positives can cause significant user dissatisfaction, so threshold selection is a design decision, not just a statistical one.
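To make the threshold trade-off concrete, here is a minimal sketch that computes precision, recall, and false positive rate at a few candidate thresholds. The scores, labels, and thresholds are hypothetical values chosen for illustration; they do not come from any real claims system.

```python
from dataclasses import dataclass


@dataclass
class ThresholdMetrics:
    threshold: float
    precision: float
    recall: float
    false_positive_rate: float


def evaluate_threshold(scores, labels, threshold):
    """Compute precision, recall, and false positive rate at one threshold.

    scores: model fraud probabilities in [0, 1]
    labels: 1 for confirmed fraud, 0 for a valid claim
    """
    tp = fp = tn = fn = 0
    for score, label in zip(scores, labels):
        flagged = score >= threshold
        if flagged and label == 1:
            tp += 1
        elif flagged and label == 0:
            fp += 1
        elif not flagged and label == 1:
            fn += 1
        else:
            tn += 1
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    return ThresholdMetrics(threshold, precision, recall, fpr)


# Hypothetical data: 2 fraud cases among 10 claims (illustrative only).
scores = [0.05, 0.10, 0.15, 0.20, 0.30, 0.35, 0.55, 0.60, 0.70, 0.90]
labels = [0, 0, 0, 0, 0, 0, 0, 1, 0, 1]

for t in (0.5, 0.65, 0.8):
    m = evaluate_threshold(scores, labels, t)
    print(f"threshold={m.threshold:.2f} precision={m.precision:.2f} "
          f"recall={m.recall:.2f} fpr={m.false_positive_rate:.3f}")
```

On this toy data, raising the threshold removes false positives but also misses one of the two fraud cases. Which point on that curve is acceptable depends on how much friction legitimate claimants can reasonably be asked to bear.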

Unsupervised Anomaly Detection Techniques

Supervised models struggle with new fraud patterns that do not resemble past records. Unsupervised anomaly detection fills this gap by learning what normal behavior looks like and flagging deviations. Isolation forests single out claims that are easy to separate from the majority. Local Outlier Factor identifies contextual outliers that differ from their neighboring cases.
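The isolation idea can be sketched in a few dozen lines. The code below is a simplified, standard-library-only illustration of an isolation forest, not a production implementation: it builds random trees and scores each point by how quickly it gets isolated. The two features (claim amount and submission hour), the synthetic data, and all parameter values are assumptions made for the example.

```python
import math
import random

EULER_GAMMA = 0.5772156649


def average_path_length(n):
    """Expected path length of an unsuccessful binary-tree search over n points."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    return 2.0 * (math.log(n - 1) + EULER_GAMMA) - 2.0 * (n - 1) / n


def build_tree(points, depth, max_depth, rng):
    """Recursively split on a random feature at a random value."""
    if depth >= max_depth or len(points) <= 1:
        return ("leaf", len(points))
    dim = rng.randrange(len(points[0]))
    lo = min(p[dim] for p in points)
    hi = max(p[dim] for p in points)
    if lo == hi:
        return ("leaf", len(points))
    split = rng.uniform(lo, hi)
    left = [p for p in points if p[dim] < split]
    right = [p for p in points if p[dim] >= split]
    return ("node", dim, split,
            build_tree(left, depth + 1, max_depth, rng),
            build_tree(right, depth + 1, max_depth, rng))


def path_length(tree, x, depth=0):
    """Depth at which x lands, adjusted for unsplit points left in the leaf."""
    if tree[0] == "leaf":
        return depth + average_path_length(tree[1])
    _, dim, split, left, right = tree
    return path_length(left if x[dim] < split else right, x, depth + 1)


def isolation_scores(points, n_trees=100, sample_size=256, seed=0):
    """Return one score per point in (0, 1]; higher means easier to isolate."""
    if len(points) < 2:
        raise ValueError("need at least two points")
    rng = random.Random(seed)
    n_sub = min(sample_size, len(points))
    max_depth = math.ceil(math.log2(n_sub))
    trees = [build_tree(rng.sample(points, n_sub), 0, max_depth, rng)
             for _ in range(n_trees)]
    norm = average_path_length(n_sub)
    scores = []
    for x in points:
        mean_depth = sum(path_length(t, x) for t in trees) / n_trees
        scores.append(2.0 ** (-mean_depth / norm))
    return scores


# Hypothetical features: (claim amount, submission hour). Synthetic data only.
data_rng = random.Random(42)
claims = [(data_rng.gauss(1000.0, 100.0), data_rng.gauss(14.0, 2.0))
          for _ in range(50)]
claims.append((25000.0, 3.0))  # an unusually large claim filed at 3 a.m.

scores = isolation_scores(claims)
most_anomalous = max(range(len(claims)), key=lambda i: scores[i])
print(most_anomalous, round(scores[most_anomalous], 2))
```

A high score says only that a claim is unusual, not that it is fraudulent, which is why such scores are better treated as one input to review than as a verdict.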

Autoencoders learn a compressed representation of valid claims and flag claims with high reconstruction error. Clustering methods reveal unusual combinations of features, while one-class SVMs focus on novelty detection. Ensemble methods aggregate several anomaly scores to improve robustness.
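One simple way to aggregate anomaly scores is to put each detector on a common scale before averaging, since raw scores from different methods are not directly comparable. The sketch below uses rank normalization for this; that choice, the detector names, and the score values are assumptions for illustration, and other aggregation schemes are equally plausible.

```python
def rank_normalize(scores):
    """Map raw scores to [0, 1] by rank; tied values share their average rank."""
    n = len(scores)
    if n == 1:
        return [0.0]
    order = sorted(range(n), key=lambda i: scores[i])
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        # Extend j across a run of tied values.
        while j + 1 < n and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        avg_rank = (i + j) / 2
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    return [r / (n - 1) for r in ranks]


def ensemble_anomaly_score(detector_scores):
    """Average the rank-normalized scores of all detectors, claim by claim."""
    normalized = [rank_normalize(s) for s in detector_scores.values()]
    n_claims = len(normalized[0])
    return [sum(col[i] for col in normalized) / len(normalized)
            for i in range(n_claims)]


# Hypothetical raw scores for four claims; higher means more anomalous.
detector_scores = {
    "isolation_forest": [0.41, 0.44, 0.79, 0.43],
    "reconstruction_error": [0.02, 0.03, 0.95, 0.40],
    "local_outlier_factor": [1.00, 1.10, 3.20, 1.05],
}

combined = ensemble_anomaly_score(detector_scores)
for claim_index, score in enumerate(combined):
    print(claim_index, round(score, 3))
```

Rank averaging keeps a single detector with an extreme raw scale from dominating the result, at the cost of discarding how far apart the raw scores were.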

Production fraud detection ensembles, such as those used by Claim Notify, combine supervised fraud models with unsupervised anomaly detection to catch both known fraud and unusual suspicious activity, without undermining user trust through high false positive rates. This layered approach is becoming common practice in high-risk financial settings.

Behavioral Analytics and Risk Scoring

Beyond static claim attributes, behavioral analytics provide dynamic signals. Device fingerprinting helps detect repeated attempts against accounts. IP reputation and geolocation consistency expose unusual access patterns. Behavioral biometrics analyze typing speed, cursor movement, and interaction timing.

Temporal signals also matter. Claims submitted at odd hours or at high velocity can indicate automation or coordination. Network analysis reveals devices, addresses, or documents shared across multiple claims. These indicators are not evaluated in isolation; they are combined into composite risk scores.
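As a rough illustration of how temporal and network signals might be combined, the sketch below counts claims per device within a sliding window and blends that velocity with an odd-hour indicator. The field names, the ten-minute window, the 1 a.m. to 5 a.m. range, and the weights are all hypothetical choices made for the example.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def velocity_counts(claims, window=timedelta(minutes=10)):
    """Count, for each claim, same-device claims inside the trailing window.

    The count includes the claim itself, so an isolated claim has velocity 1.
    """
    recent = defaultdict(list)  # device_id -> timestamps still inside the window
    counts = {}
    for claim in sorted(claims, key=lambda c: c["submitted_at"]):
        stamps = recent[claim["device_id"]]
        cutoff = claim["submitted_at"] - window
        stamps[:] = [t for t in stamps if t >= cutoff]
        stamps.append(claim["submitted_at"])
        counts[claim["claim_id"]] = len(stamps)
    return counts


def is_odd_hour(ts, start=1, end=5):
    """True when the submission hour falls in the assumed quiet period."""
    return start <= ts.hour < end


def risk_score(claim, velocity, weights=None):
    """Blend signals into one score in [0, 1]; the weights are illustrative."""
    weights = weights or {"velocity": 0.6, "odd_hour": 0.4}
    velocity_signal = min((velocity - 1) / 4, 1.0)  # saturates at 5 claims
    odd_hour_signal = 1.0 if is_odd_hour(claim["submitted_at"]) else 0.0
    return (weights["velocity"] * velocity_signal
            + weights["odd_hour"] * odd_hour_signal)


# Hypothetical claims: three from one device within five minutes at 2 a.m.
claims = [
    {"claim_id": "c1", "device_id": "d1", "submitted_at": datetime(2024, 1, 1, 2, 0)},
    {"claim_id": "c2", "device_id": "d1", "submitted_at": datetime(2024, 1, 1, 2, 2)},
    {"claim_id": "c3", "device_id": "d1", "submitted_at": datetime(2024, 1, 1, 2, 5)},
    {"claim_id": "c4", "device_id": "d2", "submitted_at": datetime(2024, 1, 1, 14, 0)},
]

counts = velocity_counts(claims)
risk_scores = {c["claim_id"]: risk_score(c, counts[c["claim_id"]]) for c in claims}
print(risk_scores)
```

Neither signal is conclusive by itself: a night-shift worker filing at 2 a.m. is not suspicious, which is why the signals are weighted and combined rather than used as hard rules.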

Privacy is essential. Behavioral monitoring should be proportionate, transparent, and compliant with data protection requirements. Even when applied appropriately, monitoring that is communicated poorly erodes trust rather than building it.

The Psychological Cost of False Positives

False positives are not neutral errors. Being labeled a fraudster is frustrating, humiliating, and infuriating. Users tend to experience it as an accusation rather than a verification step. When appeal processes are slow or opaque, abandonment rates rise sharply.

Once trust has eroded, it is hard to restore. Studies of service recovery suggest that after users are unfairly accused, retention declines regardless of how the problem is resolved. Word of mouth compounds the damage when users share negative experiences publicly.

False positives are also more common among edge-case users whose data is atypical, including people with name variants, address changes, or irregular documentation. Without appropriate safeguards, fraud systems end up penalizing the very people they are meant to protect.

Designing Human-Centered Fraud Detection

Human-centered fraud detection treats false positives as design failures rather than collateral damage. Tiered review uses automation to clear low-risk cases and routes ambiguous cases to manual review. Communication should clearly distinguish verification from suspicion.

Fast appeals ease the burden on honest users. Transparency about what triggered a review helps users understand what happened without revealing exploitable details. Graded responses ensure that minor anomalies lead to additional checks rather than outright blocks.
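Tiered review and graded responses can be expressed as a small routing function. The sketch below is one possible shape, with hypothetical cutoffs and wording; note that no tier rejects a claim automatically, and each user-facing message is phrased as verification rather than accusation.

```python
from enum import Enum


class Action(Enum):
    AUTO_APPROVE = "auto_approve"
    EXTRA_VERIFICATION = "extra_verification"
    MANUAL_REVIEW = "manual_review"


# Hypothetical user-facing wording, framed as verification, not suspicion.
USER_MESSAGES = {
    Action.AUTO_APPROVE: "Your claim has been received and is being processed.",
    Action.EXTRA_VERIFICATION: (
        "To protect your funds, we need one more document to confirm your details."
    ),
    Action.MANUAL_REVIEW: (
        "A specialist is reviewing your claim. You can check its status "
        "or request an appeal at any time."
    ),
}


def route_claim(risk, low=0.3, high=0.8):
    """Map a risk score in [0, 1] to a graded response.

    The cutoffs are illustrative assumptions. The highest tier escalates
    to a human reviewer instead of blocking the claim outright.
    """
    if not 0.0 <= risk <= 1.0:
        raise ValueError("risk must be between 0 and 1")
    if risk < low:
        return Action.AUTO_APPROVE
    if risk < high:
        return Action.EXTRA_VERIFICATION
    return Action.MANUAL_REVIEW


for risk in (0.1, 0.5, 0.9):
    action = route_claim(risk)
    print(risk, action.value, "->", USER_MESSAGES[action])
```

Keeping the cutoffs as parameters makes them easy to revisit as false positive rates and appeal outcomes are observed.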

High-stakes decisions must be supervised by humans. Verification should be handled with care so that it does not damage dignity or trust. Second-chance mechanisms let users be reinstated quickly after mistaken flags. Explainability lets both reviewers and users understand model decisions, which promotes fairness and accountability.

Building Fraud Protection That Doesn’t Harm Users

Fraud detection is not only a machine learning problem. It is a human trust problem. Systems optimized solely for the fraction of fraud detected are likely to cause long-term damage through false positives and lost user trust.

Interpretable models, layered anomaly detection, and psychologically informed design are the way forward for financial claims security. A fraud prevention system is secure and sustainable when it protects the money without mistreating users. The aim is not to eliminate fraud at any cost, but to provide smart protection that safeguards assets without treating the people who use the system as enemies.